Scan. Detect. Protect.

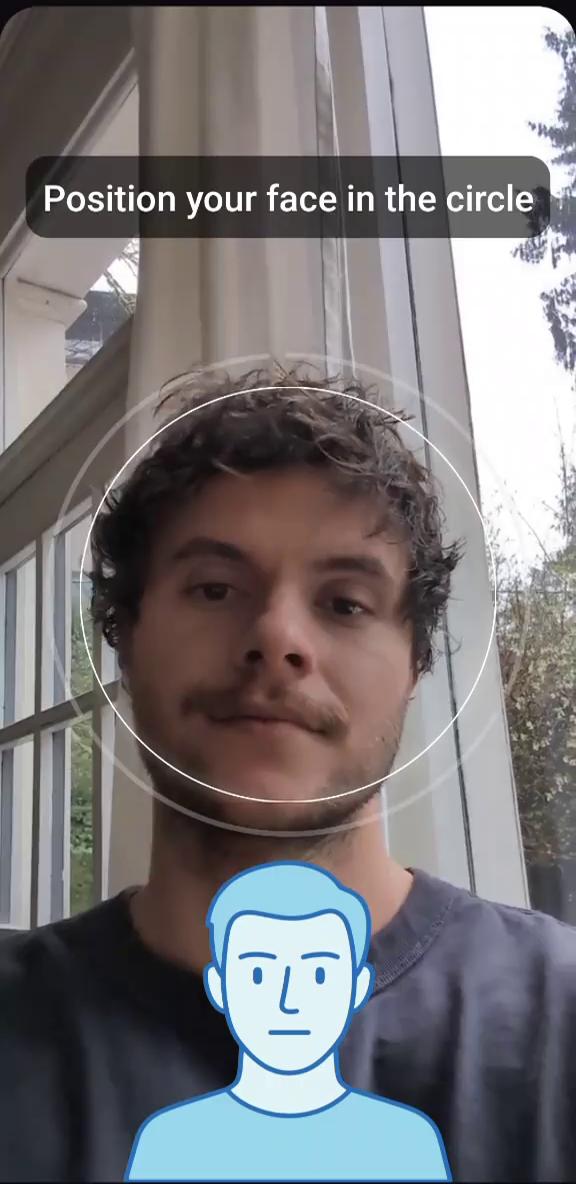

Image & Video Moderation: Identify explicit sexual content, graphic violence, and more. Detect and block CSAM.

Age Verification: Reliable, affordable, and compliant with child safety laws. Browser, Android, and iOS integration.

Behaviour Analysis: Detect and ban predators in real time. Flag bots, scammers, hackers, and other bad actors.

Image and Video Moderation

Detect explicit sexual content, graphic violence, Child Sexual Abuse Material (CSAM), and more

Detect known, unknown, and AI-generated CSAM

100,000 scans free every month

Supports images, videos, and GIFs

Example scans: beach_photo.jpg (image), clip_party.mp4 (video), animation.gif (GIF)

99.7% accuracy

< 500 ms

Example result: Safe, 94.2% (nsfw_classifier_v3)

Advanced Behaviour Analysis

Detect bots, illegal sales, and illicit promotions

Flag grooming behaviour, predatory patterns, and terms-of-service (ToS) violations

On detection, automatically trigger account reviews and enforce bans, suspensions, and other actions

Pricing

Pay only for what you use.

$0.10 per 1,000 scans

- No minimum spend

- Video & GIF support

- Content moderation & age detection

- 100,000 free scans every month
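To make the pricing concrete, here is a minimal Python sketch of a monthly cost estimate. It assumes the 100,000 free scans are deducted before billing and that billable scans are charged pro rata at $0.10 per 1,000. Neither detail is stated on this page, and the function name `estimate_monthly_cost` is purely illustrative.

```python
from decimal import Decimal

PRICE_PER_1000_SCANS = Decimal("0.10")  # listed price
FREE_SCANS_PER_MONTH = 100_000          # listed free allowance


def estimate_monthly_cost(scans: int) -> Decimal:
    """Estimate one month's bill in dollars.

    Assumption: the free allowance is subtracted first and the remainder
    is billed pro rata (not rounded up to whole blocks of 1,000).
    """
    if scans < 0:
        raise ValueError("scans must be non-negative")
    billable = max(0, scans - FREE_SCANS_PER_MONTH)
    cost = Decimal(billable) * PRICE_PER_1000_SCANS / 1000
    return cost.quantize(Decimal("0.01"))


if __name__ == "__main__":
    for scans in (50_000, 250_000, 1_000_000):
        print(f"{scans:>9,} scans -> ${estimate_monthly_cost(scans)}")
```

Under these assumptions, 50,000 scans cost $0.00, 250,000 scans cost $15.00, and 1,000,000 scans cost $90.00.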

Integration

Easy to integrate.

JPEG, PNG, GIF, WebP, AVIF, MP4, WebM, MOV
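Before sending a file for scanning, a client may want to check that it is in one of the supported formats. The sketch below does this by file extension only. The extension-to-kind mapping and the helper name `media_kind` are assumptions made for illustration, not part of any documented API, and an extension check does not validate the file contents.

```python
from pathlib import Path
from typing import Optional

# Assumed mapping from common file extensions to the supported formats.
SUPPORTED_EXTENSIONS = {
    ".jpg": "image", ".jpeg": "image", ".png": "image",
    ".webp": "image", ".avif": "image",
    ".gif": "gif",
    ".mp4": "video", ".webm": "video", ".mov": "video",
}


def media_kind(filename: str) -> Optional[str]:
    """Return "image", "gif", or "video", or None if the extension is unsupported."""
    return SUPPORTED_EXTENSIONS.get(Path(filename).suffix.lower())


if __name__ == "__main__":
    for name in ("beach_photo.jpg", "clip_party.mp4", "animation.gif", "notes.txt"):
        print(name, "->", media_kind(name))
```

Files for which `media_kind` returns `None` can be rejected locally instead of being submitted for a scan.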
